Cybersecurity Journalist Profile Evaluation Criteria

Here’s something most PR teams and security vendors get wrong: they chase follower counts. They obsess over Twitter clout, podcast download numbers, guest appearances on panels. And they miss the journalists who actually move the needle—the ones whose reporting shapes policy, shifts stock prices, and defines public understanding of a breach.

Evaluating a cybersecurity journalist isn’t a soft skill. It’s a critical competency, especially in a world where, according to the Cybersecurity and Infrastructure Security Agency (CISA), ransomware attacks increased by 73% in 2023 alone. The wrong media relationship can amplify misinformation. The right one builds institutional credibility that no press release can buy.

As someone who’s spent years at the intersection of security communications and technology journalism, I’ll walk you through exactly what the evaluation criteria should look like—and what most frameworks completely miss.

What Are Cybersecurity Journalist Profile Evaluation Criteria?

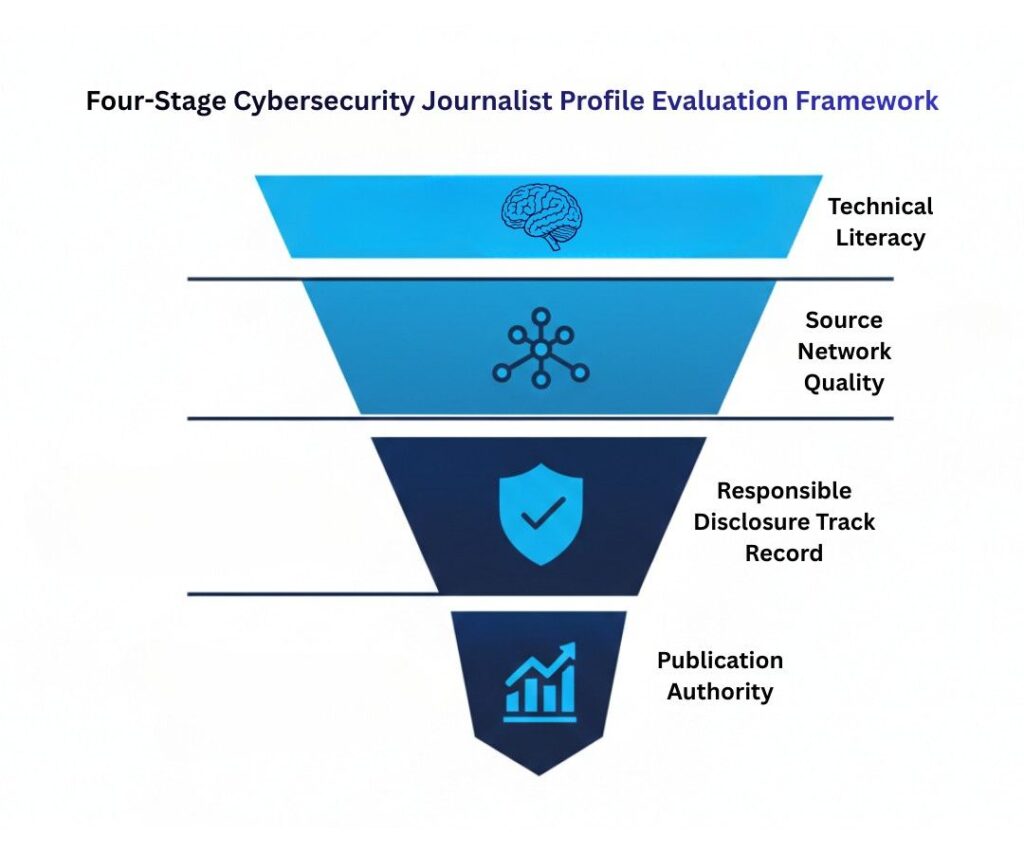

Cybersecurity journalist profile evaluation criteria are the specific benchmarks used to assess a security journalist’s expertise, credibility, accuracy, and editorial reach. They work by examining a journalist’s technical literacy, track record of responsible disclosure, source quality, and publication authority—going far beyond surface metrics like social following or article volume. Strong evaluation criteria separate journalists who genuinely understand threat landscapes from those who cover security as a beat of convenience.

Why Most Evaluation Frameworks Are Broken (And What's Actually at Stake)

The cybersecurity media space has exploded. And that’s… not entirely a good thing.

Between 2019 and 2024, the number of publications claiming “cybersecurity coverage” tripled, according to data from Muck Rack’s State of Journalism report. But technical accuracy hasn’t kept pace. A 2024 study from the Reuters Institute for the Study of Journalism found that roughly 38% of cybersecurity articles in mainstream publications contained at least one significant factual error—misidentifying attack vectors, conflating vulnerability types, or misattributing threat actors.

That’s terrifying if you’re a CISO trying to decide who gets early access to your incident response story.

The stakes go beyond reputation. Inaccurate coverage of a data breach can trigger regulatory scrutiny, affect investor relations, and—in severe cases—compromise ongoing law enforcement investigations. Brian Krebs, founder of KrebsOnSecurity and arguably the most respected independent cybersecurity journalist alive, has said in multiple interviews that a single poorly sourced story about an active intrusion can burn years of trust with intelligence contacts.

So what does a rigorous evaluation actually look like? Let’s build that framework.

The 4-Stage Framework for Evaluating a Cybersecurity Journalist's Profile

Stage 1: Technical Literacy Verification

This is where most evaluations start—and where most stop too soon. Technical literacy isn’t about whether a journalist can spell “zero-day.” It’s about whether they understand the implications of one.

Look for: accurate use of attack taxonomy (phishing vs. spear-phishing vs. whaling), correct attribution language (they say “threat actors believed to be affiliated with” rather than “hackers from Russia”), and whether they distinguish between vulnerabilities and exploits. A journalist who conflates CVSS severity scores with actual exploitability risk is working from a surface-level playbook.

Check their archive. Seriously—go back 18 months and read five articles. Do they quote technical sources directly, or paraphrase in ways that introduce inaccuracies? Do they link to CVE databases, NIST advisories, or researcher writeups? That linking behavior alone is a strong signal.
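The linking check can be made a little more systematic. The sketch below is one possible way to do it, not an established metric: it takes the outbound links you collected by hand from an article and reports what share point to primary sources. The domain list and the function names are assumptions chosen for illustration, so adjust them to your own standards.

```python
from urllib.parse import urlparse

# Assumed list of primary-source domains; extend it to match your own standards.
PRIMARY_SOURCE_DOMAINS = {
    "nvd.nist.gov",
    "nist.gov",
    "cve.org",
    "cve.mitre.org",
    "cisa.gov",
}


def is_primary_source(url: str) -> bool:
    """Return True if the URL's host is a listed domain or a subdomain of one."""
    host = (urlparse(url).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in PRIMARY_SOURCE_DOMAINS)


def primary_source_ratio(links: list[str]) -> float:
    """Share of an article's outbound links that point to primary sources."""
    if not links:
        return 0.0
    return sum(is_primary_source(u) for u in links) / len(links)


# Hypothetical link list copied from one article.
article_links = [
    "https://nvd.nist.gov/vuln/detail/CVE-2021-44228",
    "https://www.cisa.gov/news",
    "https://example.com/press-release",
    "https://vendor.example/blog",
]
print(primary_source_ratio(article_links))
```

A ratio like this is only a prompt for closer reading. A researcher writeup hosted on a personal blog is also a primary source, and a fixed domain list will miss it.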

Stage 2: Source Network Quality

Here’s the kicker most frameworks skip entirely: who a journalist talks to matters more than how often they publish.

Elite cybersecurity journalists have cultivated relationships with incident responders, threat intelligence analysts, vulnerability researchers, and law enforcement contacts—not just PR firms. You can infer this from their articles. Are they quoting named researchers at firms like Mandiant, CrowdStrike, or Recorded Future? Are they citing original research, or recycling vendor press releases?

Melissa Hathaway, who led cybersecurity policy efforts under two U.S. presidential administrations, put it plainly in a 2023 panel discussion: “The journalists I trust are the ones who call me to challenge my assumptions, not validate their headlines.”

That’s the bar.

Stage 3: Responsible Disclosure Track Record

This one’s non-negotiable. A cybersecurity journalist’s handling of pre-disclosure vulnerability information tells you everything about their editorial judgment.

Research their past: Have they published details that enabled copycat attacks? Have they coordinated with vendors on embargo timelines? The Cybersecurity Coalition’s responsible disclosure framework sets a clear industry standard. Journalists who consistently operate within it are partners. Those who don’t are liabilities.

Stage 4: Publication Authority and Audience Fit

Reach matters, but reach to whom matters more. A 500-word piece in Dark Reading hitting 80,000 CISOs is categorically different from a 2,000-word feature in a general tech publication with 4 million casual readers. Match the journalist’s audience profile to your communication objective.
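The four stages can be combined into a simple scorecard. The sketch below is a minimal illustration under stated assumptions: the 1 to 5 scale, the weights, and the disclosure floor are all hypothetical values, not figures from any published standard. The one design choice taken from the framework itself is that the disclosure record acts as a gate, since that stage is described as non-negotiable.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical weights for the four stages; they only need to sum to 1.
WEIGHTS = {
    "technical_literacy": 0.35,
    "source_network": 0.25,
    "disclosure_record": 0.25,
    "audience_fit": 0.15,
}

# Assumed minimum disclosure score; anything lower fails regardless of the rest.
DISCLOSURE_FLOOR = 3


@dataclass
class JournalistScores:
    """Reviewer-assigned scores on a 1-5 scale, one per stage."""
    technical_literacy: int
    source_network: int
    disclosure_record: int
    audience_fit: int


def overall_score(s: JournalistScores) -> Optional[float]:
    """Weighted 1-5 score, or None when the disclosure record is below the floor."""
    values = {name: getattr(s, name) for name in WEIGHTS}
    for name, value in values.items():
        if not 1 <= value <= 5:
            raise ValueError(f"{name} must be between 1 and 5, got {value}")
    if s.disclosure_record < DISCLOSURE_FLOOR:
        return None
    return round(sum(WEIGHTS[n] * v for n, v in values.items()), 2)


print(overall_score(JournalistScores(4, 5, 4, 3)))  # 4.1
print(overall_score(JournalistScores(5, 5, 2, 5)))  # None
```

The number matters less than the discipline of scoring each stage separately, which keeps a strong audience fit from hiding a weak disclosure history.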

Cybersecurity Journalists vs. Tech Generalists—The Comparison Most People Get Wrong

Let’s bust a myth: publication prestige does not equal cybersecurity expertise.

Some of the most technically accurate cybersecurity journalism comes from independent researchers-turned-writers and niche outlets nobody outside the industry reads. Meanwhile, some high-profile tech mastheads routinely publish pieces that security practitioners mock in Slack channels.

The distinction breaks down like this:

Specialized cybersecurity journalists cover the beat exclusively, maintain researcher relationships, and understand operational security well enough to protect sources. Some hold certifications (CISSP, CEH, or academic equivalents), though many built their expertise through years of reporting instead. Their error rates on technical claims tend to be lower.

Tech generalists covering security often produce higher-volume, more accessible content—but at the cost of precision. They’re valuable for awareness campaigns targeting non-technical audiences. They’re dangerous for sensitive disclosures or nuanced threat actor attribution.

A practical test: search a journalist’s name alongside “correction” or “retraction.” It’s a blunt instrument, but it is revealing. A journalist with zero published corrections across 200+ security articles is either incredibly careful or incredibly unwilling to be held accountable, while a long string of retractions is its own warning. Neither extreme is reassuring; a handful of prompt, visible corrections signals intellectual honesty.
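If you run this check across a long media list, generating the queries saves some typing. The helper below is a small sketch. The function name is hypothetical, and the optional `site:` filter assumes your search engine supports that operator, which most major ones do.

```python
from typing import Optional


def correction_queries(name: str, outlet_domain: Optional[str] = None) -> list[str]:
    """Build exact-name search queries for published corrections and retractions."""
    # Restrict results to one outlet when a domain is supplied.
    suffix = f" site:{outlet_domain}" if outlet_domain else ""
    return [f'"{name}" {term}{suffix}' for term in ("correction", "retraction")]


# "Jane Doe" and example.com are placeholders.
for query in correction_queries("Jane Doe", "example.com"):
    print(query)
```

Paste each query into a search engine and read the results by hand. The count alone says little without knowing what was corrected and how quickly.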

Who Benefits Most from Rigorous Journalist Evaluation Criteria

The obvious answer is PR and communications teams. But that’s only the beginning.

Security vendors evaluating media partnerships need criteria frameworks to avoid lending credibility to journalists whose coverage could misrepresent their products during vulnerability disclosures. A botched story about a patch can tank quarterly sales cycles.

CISOs and security leaders benefit when deciding who gets briefed during an active incident. Information shared with the wrong journalist—even under embargo—can surface prematurely.

Researchers and academics use journalist evaluation when deciding where to place their findings for maximum responsible impact. According to a 2024 survey by the SANS Institute, 67% of security researchers said they evaluate a journalist’s past disclosure handling before agreeing to an interview.

But here’s who this doesn’t work for: organizations seeking soft coverage with no accountability. If your goal is puff pieces, rigorous criteria will just frustrate you. This framework is for people who want accurate, credible, durable media relationships.

Expert Insight

Dr. Josephine Wolff, Associate Professor of Cybersecurity Policy at Tufts University’s Fletcher School and author of You’ll See This Message When It Is Too Late, has noted that the best security journalists share one trait: they understand that accuracy in this space has physical-world consequences. “A miscommunicated vulnerability disclosure,” she’s observed, “isn’t just a PR problem. It can affect critical infrastructure decisions.”

That framing changes how seriously you take evaluation criteria.

FAQs

What is the most important criterion when evaluating a cybersecurity journalist?

Technical accuracy paired with responsible source handling. A journalist can be prolific and well-connected, but if their technical claims are sloppy or their disclosure practices inconsistent, they're a liability rather than an asset. Accuracy is the non-negotiable baseline everything else builds on.

How do you assess the quality of a journalist's source network?

Read their citations. Do they quote named researchers, reference original CVEs, link to NIST advisories, or cite incident response firms directly? Journalists with strong source networks name their sources and link to primary research. Heavy reliance on anonymous sourcing without institutional credibility is a yellow flag.

Does a journalist's social media following matter?

Mostly no, at least not as a primary criterion. A journalist with 3,000 Twitter followers who's read by every CISO at a Fortune 500 matters more than one with 50,000 followers whose audience is casual tech enthusiasts. Audience composition beats raw reach.

What is responsible disclosure, and why does it matter when evaluating a journalist?

Responsible disclosure is the practice of notifying vendors or affected parties before publishing vulnerability details publicly, giving them time to patch. A journalist who consistently follows this practice, coordinating with vendors and respecting embargo timelines, demonstrates both ethical judgment and the kind of source relationships that produce better reporting.

How often should you re-evaluate a journalist's profile?

At minimum annually, but also after any significant editorial incident. Journalists change beats, outlets change editorial standards, and a reporter who was meticulous in 2022 might be operating under very different constraints in 2025. Treat it like any other vendor review.

Can a journalist be credible without security certifications?

Yes. Certifications are one signal, not a requirement. Some of the most respected cybersecurity journalists, including Brian Krebs, built their expertise through years of investigative reporting rather than formal credentials. What matters is demonstrated technical literacy in published work, not paper qualifications.

Which cybersecurity publications are most authoritative?

For practitioner audiences: Dark Reading, SC Media (formerly SC Magazine), The Record by Recorded Future, and Krebs on Security. For executive and policy audiences: Wired, The Wall Street Journal's cybersecurity coverage, and Politico's technology desk. Context matters: which outlet counts as authoritative depends on your audience and your communication goal.

How do you vet a cybersecurity journalist before working with them?

Start with their archive: read five recent articles for technical accuracy. Search their name plus "correction" or "retraction." Check whether they link to primary sources. Look at who they quote. And if possible, ask trusted contacts in your industry network whether they've worked with this journalist and how that experience went. Reputation in the security community travels fast.

Conclusion

After years in this space, here’s what actually matters:

First: Technical accuracy isn’t optional—it’s the baseline. A journalist who can’t correctly describe a SQL injection attack has no business covering a breach.

Second: Source quality predicts reporting quality. The journalists worth working with have cultivated real relationships in the security community, not just PR contact lists.

Third: Responsible disclosure track record is your clearest signal of editorial judgment. Past behavior here predicts future behavior almost without exception.

Whether you’re a CISO managing a sensitive disclosure or a vendor evaluating a media partnership, cybersecurity journalist profile evaluation criteria aren’t bureaucratic overhead—they’re how you protect your organization’s credibility and, in some cases, actual operational security.

Start with the archive. Read five articles. Check the citations. The right journalists make themselves obvious.