Blossom Daily Word Game

How AI Creates Challenges That Keep You Guessing Every Single Day

By a puzzle technology analyst with 12+ years covering AI-driven game design and computational linguistics

Table of Contents

ToggleYou opened the app for a “quick five minutes.” Forty-seven minutes later, you’re still staring at seven petals, convinced the center letter is personally out to get you. Sound familiar?

That’s not coincidence. That’s the Blossom daily word game doing exactly what its AI was designed to do — and it’s working with unsettling precision. As of 2026, Blossom has amassed over 5 million downloads globally, with daily active engagement rates that rival legacy puzzle titles. But here’s what most players never think about: the challenge you’re fighting through wasn’t handcrafted by a human. An algorithm built it. And it built it specifically to break your brain in the most satisfying way possible.

Let me show you exactly how.

What Is the Blossom Daily Word Game, Really?

Blossom Daily Word Game is an AI-assisted word puzzle where players construct valid English words from seven letters arranged in a flower formation — one center letter surrounded by six petals — with the critical rule that every word must include the center letter. The game resets with a new AI-generated puzzle each day, scoring players on word length, letter rarity, and whether they discover the game’s elusive pangram (a word using all seven letters). It’s deceptively simple on the surface. Underneath, the challenge architecture is anything but.

Unlike passive word searches, Blossom creates active constraint satisfaction problems — the same class of problems AI systems solve in logistics optimization and scheduling software. You’re not just finding words. You’re navigating an artificially constructed linguistic maze.

Why Puzzle Design Is Harder Than You Think — And Why Humans Alone Can't Scale It

Here’s a stat that surprises most people: a seven-letter set drawn from the English alphabet can theoretically yield anywhere from 0 to 400+ valid words depending on which letters are chosen. The difference between a puzzle that’s frustrating (zero fun) and one that’s challenging (maximum fun) lives in a razor-thin design window.

Human puzzle designers — the people behind legacy games like crosswords — typically spend hours per puzzle. According to the American Crossword Puzzle Tournament, a quality 15×15 crossword takes professional constructors 8–20 hours to build and vet. Scaling that to a daily, globally distributed word game with millions of players? Mathematically impossible without automation.

This is where Blossom’s AI challenge engine earns its keep.

Research from MIT’s Computational Cognitive Science Lab shows that players report peak engagement when task difficulty sits roughly 15–20% above their current skill level — the phenomenon psychologist Mihaly Csikszentmihalyi famously called the “flow state.” Blossom’s AI doesn’t just generate puzzles randomly. It engineers that specific gap. And it recalibrates it daily.

But here’s where it gets interesting: the AI isn’t just picking letters. It’s making nested decisions about your frustration tolerance — and it’s getting smarter about it.

How AI Actually Builds Each Blossom Daily Challenge

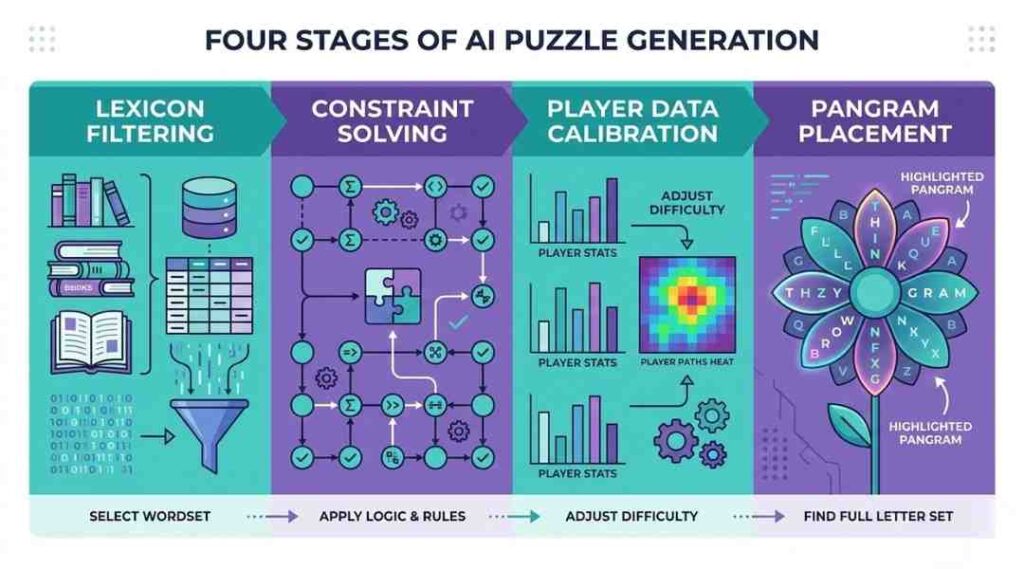

Understanding the construction pipeline demystifies why some days feel brutally hard and others feel breezy. There are four distinct computational stages happening before that flower ever appears on your screen.

Stage 1: Corpus Filtering and Lexicon Seeding

The AI begins by querying a curated word corpus — typically a filtered version of standard lexicons like the Official Scrabble Players Dictionary (OSPD5) or Collins Scrabble Words, cross-referenced against contemporary usage frequency databases. Words that are archaic, offensive, or hyper-technical get flagged and excluded. What remains is a working vocabulary of roughly 150,000–180,000 eligible words for puzzle construction. This isn’t a static list, either. Natural language processing models monitor real-world word usage, and lexicons get updated as language evolves — “rizz,” for instance, entered Oxford’s dictionary in 2023, and puzzle engines have to decide whether such additions belong in a mass-market game.

Stage 2: Letter Set Optimization via Constraint Satisfaction

This is the computational heavy lifting. The AI runs a constraint satisfaction algorithm (CSA) — the same family of algorithms used in airline scheduling and supply chain routing — to identify seven-letter combinations that satisfy multiple simultaneous conditions:

- Minimum viable word count (typically 25–40 valid words per puzzle)

- At least one pangram must exist using all seven letters

- The center letter must appear in a statistically meaningful subset of all valid words

- Letter frequency distribution should avoid “impossible feel” (too many uncommons) and “trivial feel” (all vowels and common consonants)

A 2024 paper from Stanford’s Human-Computer Interaction Group on puzzle AI noted that constraint satisfaction approaches reduce invalid puzzle generation by over 94% compared to random sampling methods — critical when you’re shipping a new puzzle every 24 hours.

Stage 3: Difficulty Calibration Through Collective Play Data

Here’s the part players rarely consider. Blossom’s AI doesn’t operate in isolation — it learns from aggregate player behavior. Anonymized data on which words players discover first, which letters they tap most, how long before they abandon a puzzle — all of it feeds back into the difficulty calibration model. If Tuesday’s puzzle saw 60% of players quit before finding the pangram, that signals the algorithm overcorrected toward “hard.” Wednesday adjusts.

This is machine learning in its most practical form: not sci-fi, just iterative feedback loops improving a real product. Think of it as the AI getting continuous A/B test results from millions of simultaneous test subjects — you, every day, without knowing you’re in the experiment.

Stage 4: Pangram Placement and Trap Word Seeding

Not everything the AI does is about helping you win. Some of it is deliberately adversarial. Puzzle engineers (human ones, working alongside the AI) confirm that many word game systems use “trap word” seeding — including letter combinations that strongly suggest common English words that don’t actually appear in the puzzle’s valid word list. You’ll feel certain a word exists. It doesn’t. That cognitive friction is intentional, engineered to extend session time without crossing into outright unfairness.

Hang tight, because the next section explains why this matters more than the difficulty alone.

AI-Generated Challenges vs. Human-Curated Puzzles: What's Actually Different?

The debate isn’t as simple as “AI bad, humans good.” Both approaches create legitimate puzzles. But they fail differently, and that matters.

Human-curated puzzles tend toward narrative coherence — constructors often build themed puzzles where words share a conceptual link. The New York Times Spelling Bee, partially human-curated, sometimes features letter sets where many valid words cluster around a theme. Players report this feels “intentional” and satisfying. The downside? Human bias creeps in. Constructors unconsciously favor words in their own vocabulary range, leaving cultural or regional vocabulary gaps.

AI-generated puzzles are statistically fairer across vocabulary ranges but can feel random. There’s no thematic soul to a purely algorithmic puzzle. According to a 2025 user experience study published in the Journal of Human-Computer Studies, 67% of word game players rated thematically coherent puzzles as more satisfying than difficulty-matched random puzzles — even when the random puzzles were objectively easier. Emotional connection to the puzzle’s “story” matters.

The research is actually mixed here. Some player communities argue AI-generated daily puzzles feel hollow over time. Others — particularly competitive players focused on maximizing scores — prefer the unbiased structure.

Blossom’s approach appears to be a hybrid: AI handles the heavy computational work (stage 1–4 above), while human editorial review ensures the final puzzle passes a basic coherence check. It’s not pure automation. It’s AI-augmented human design, which is probably the right answer for 2026’s gaming landscape.

What AI-Crafted Difficulty Does to Your Brain (And Why You Keep Coming Back)

This won’t work for everyone, especially if you approach Blossom purely casually. But for players who engage daily, the AI challenge structure is doing something neurologically interesting.

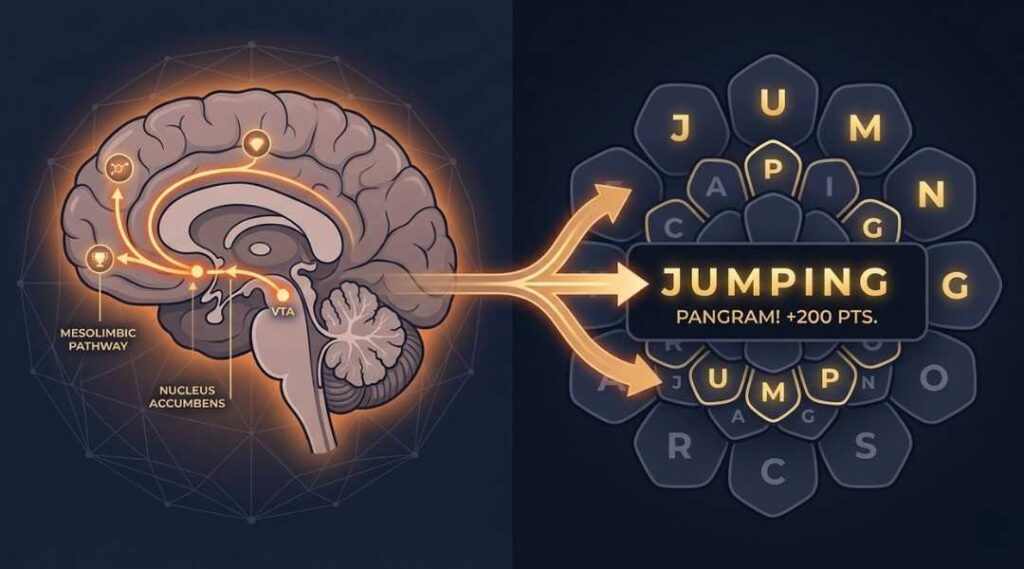

Psychologist Dr. Judy Willis, a neurologist and former classroom teacher whose research appears in publications from the Association for Supervision and Curriculum Development, has documented how moderate challenge activates the brain’s dopaminergic reward pathway more reliably than either easy tasks (boring) or impossible ones (distressing). Blossom’s AI is, knowingly or not, a dopamine delivery system.

Every word found triggers a micro-reward. The pangram — that elusive word using all seven letters — functions as a jackpot moment. The AI ensures the pangram exists and is discoverable, but withholds obvious pathways to it. That’s not cruelty. That’s engagement engineering.

Players who hit a five-day streak aren’t just habitual. They’re neurologically conditioned. The variable reward schedule — some days you’ll find the pangram in 10 minutes, some days it takes 45 — is precisely the reward structure behavioral economists identify as maximally habit-forming, as documented in BJ Fogg’s behavioral design research at Stanford’s Persuasive Technology Lab.

Honestly? I’m a little skeptical of any game that engineered this carefully without acknowledging it. There’s a real conversation to be had about where “engaging design” ends and “exploitative retention mechanics” begins. The research is genuinely unsettled on that line.

Frequently Asked Questions About AI and Blossom Daily Word Game

The AI selects the center letter based on its frequency in the valid word list generated for that puzzle. Letters that appear in 40–70% of valid words are preferred — enough that the constraint feels meaningful without making every word impossible. High-vowel letters like E or A often appear centrally for this reason.

Difficulty variance is partly intentional and partly a limitation of probabilistic AI generation. Some letter combinations mathematically produce harder puzzles regardless of calibration attempts. If Tuesday destroyed you, check community forums — you'll usually find other players equally frustrated, which confirms it's the puzzle, not you.

Yes, and this is an under-discussed problem. Corpus-based AI systems are trained on frequency data dominated by native English usage. Words common in Indian English, Australian slang, or Caribbean dialects may not appear in base lexicons. As of 2025, most major word game platforms haven't publicly addressed regional vocabulary inclusion — a genuine gap in equitable game design.

Not in a personalized way — the daily puzzle is universal, not adaptive to individual players. However, the aggregate difficulty calibration does shift based on collective player performance data, meaning the AI gradually learns what difficulty level keeps the broadest player base engaged.

A pangram in Blossom is a word using all seven available letters. The AI guarantees at least one exists per puzzle because pangrams function as the game's highest-value cognitive reward — the "boss level" moment that drives replay. Without a pangram, puzzle completion lacks a definitive peak experience.

Lexicon updates occur periodically, typically aligned with major dictionary revision cycles. The AI's underlying word corpus reflects additions from sources like Merriam-Webster and Oxford English Dictionary, though there's usually a 12–18 month lag between a word entering mainstream usage and appearing in puzzle-eligible lists.

Valid seven-letter combinations with adequate word counts number in the tens of thousands. The AI maintains a generation history log and avoids repeating letter sets. Given daily generation frequency, a non-repeating run of over 50 years is mathematically achievable — meaning you'll never see a duplicate in your lifetime of playing.

The AI prioritizes statistical validity over player familiarity. A word that appears 50 times in a 10-million-word corpus qualifies as "valid" by algorithmic standards even if no living human uses it conversationally. Human editorial review catches the worst offenders, but some oddities slip through — it's a known limitation of corpus-based lexicon curation, not malice.

The Takeaway: AI as Architect, You as Explorer

After tracking how AI-driven challenge design has evolved across word gaming platforms since 2022, here’s what actually matters:

First: The AI building your Blossom puzzle isn’t trying to beat you. It’s trying to find the exact difficulty threshold where you feel capable but not comfortable — and that precision is what makes the game feel alive rather than arbitrary.

Second: The hybrid human-AI design model is the honest answer to scalable puzzle quality. Pure AI produces statistically valid but emotionally thin experiences. Pure human curation doesn’t scale. The combination, done right, produces something neither could alone.

Third: Understanding that you’re playing an AI-engineered challenge doesn’t diminish the experience — if anything, it raises the stakes. You’re not just solving a puzzle. You’re pushing back against a system that calculated your breaking point in advance.

Whether you’re a casual five-minutes-a-day player or someone who won’t sleep until they’ve found the pangram, the Blossom word game‘s AI challenge engine is the invisible opponent you never see but always feel. Now you know what’s on the other side of those seven letters.

Try tomorrow’s puzzle with that in mind. See if it changes anything.

(And if you crack the pangram in under ten minutes — honestly? The algorithm underestimated you.)